Pentagon planning joint development efforts for ‘multimodal’ generative AI

The Department of Defense wants to acquire generative artificial intelligence capabilities such as large language models. But don’t expect the Pentagon to buy them off the shelf or rely solely on industry to provide solutions, according to a senior DOD official.

Generative AI has gone viral in recent months with the emergence of ChatGPT and other tools that can generate content — such as text, audio, code, images, videos and other types of media — based on prompts and the data they’re trained on.

However, the Pentagon doesn’t trust the commercial products currently on the market, which have been known to “hallucinate” and provide inaccurate information.

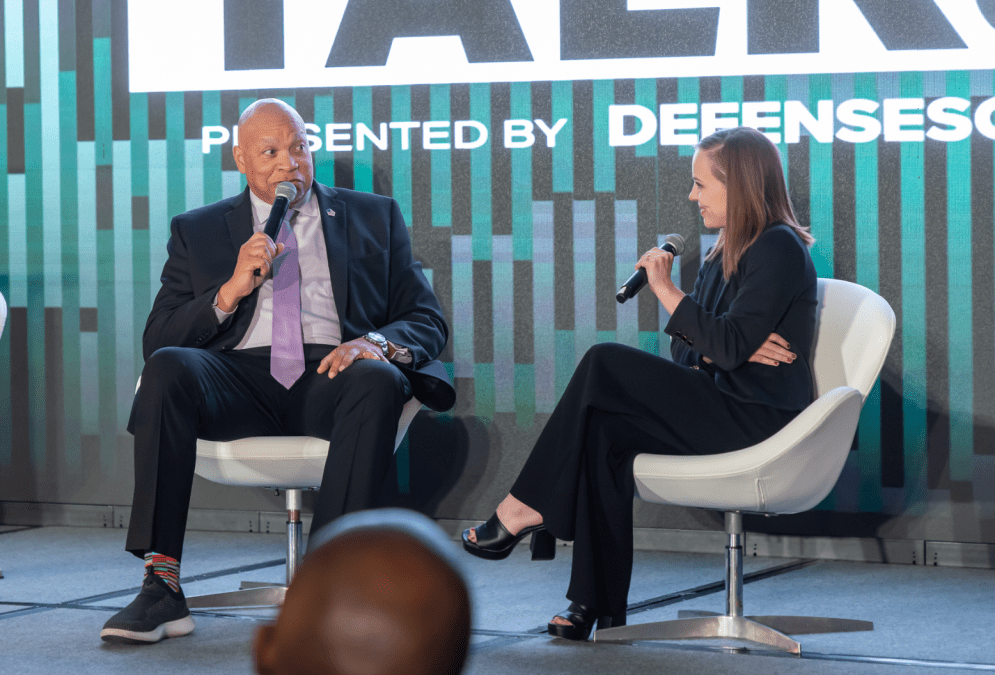

“We are not going to use ChatGPT in its present instantiation. However, large language models have a lot of utility. And we will use these large language models, these generative AI models, based on our data. So they will be tailored with Defense Department data, trained on our data, and then also on our … compute in the cloud and/or on-prem, so that it’s encrypted and we’re able to essentially … analyze its, you know, feedback,” Maynard Holliday, the Pentagon’s deputy CTO for critical technologies, said Thursday at Defense One’s annual Tech Summit.

The department is standing up “AI hubs” that will include DOD labs as well as industry and academia, he noted.

“We have some AI hubs that are going to be ingesting specific data around vision, around electro-optic signatures, so that we can teach our own neural nets. And we’ll work with companies like Microsoft, AWS, together to train systems that are bespoke to DOD and together, you know, test, validate and verify them. So, we’re not going to completely … give an RFP [to industry] and say, ‘Alright, you know, here’s our data — you know, go do it and … come back.’ You know, it’s gonna be a joint process,” Holliday added.

The Pentagon’s quest for generative AI tech is complicated by the fact that it wants “multimodal” capabilities.

“We’ve got to get a handle on our data and … we’ve got to label all of our data. So, the electronic signatures of different platforms, all the vision and all the full-motion video we get — it’s got to get labeled. And then once that’s labeled, then we can put it into generative [AI models]. Because large language models are just one modality. How it’s going to work for us — it’s going to be multimodal. So, it’s gonna have to be language, it’s gonna have to be vision, it’s gonna have to be signals. And that’s all gonna have to be melded for us to use it,” Holliday told DefenseScoop on the sidelines of the conference.

He views the pursuit of generative AI capabilities as a DOD-wide effort akin to Joint All-Domain Command and Control (JADC2), a Pentagon initiative that aims to better connect the U.S. military’s many sensors, shooters and networks through pursuits like the Navy’s Project Overmatch, the Army’s Project Convergence and the Air Force’s Advanced Battle Management System.

“We fight jointly, right? And so it’ll be a joint model with Project Overmatch, Project Convergence and all of those efforts feeding into the model,” Holliday told DefenseScoop. “That’s what it’s going to take.”

DefenseScoop asked Holliday how long it will be before the department has its own DOD-centric large language models.

“I’d say it’s TBD right now,” he said. “Months to years.”

Next week, the Defense Department will host a conference in McLean, Virginia, focused on generative AI. About 250 people from government, industry and academia are expected to attend, according to Holliday.

“We’ve got to level-set everybody,” he told DefenseScoop. Topics to be explored, he said, include: “What are DOD’s use cases and what’s industry and academia doing — and then what technical gaps can we close to get us to those foundational models?”