Pentagon CIO and CDAO: Don’t pause generative AI development — accelerate tools to detect threats

Two senior Pentagon officials said they share legitimate concerns that recently released generative artificial intelligence capabilities could be applied irresponsibly to cause harm. But they confirmed they are not part of the growing movement of experts calling for a temporary halt to the technology’s development so that humans can first learn more about it and its potential consequences.

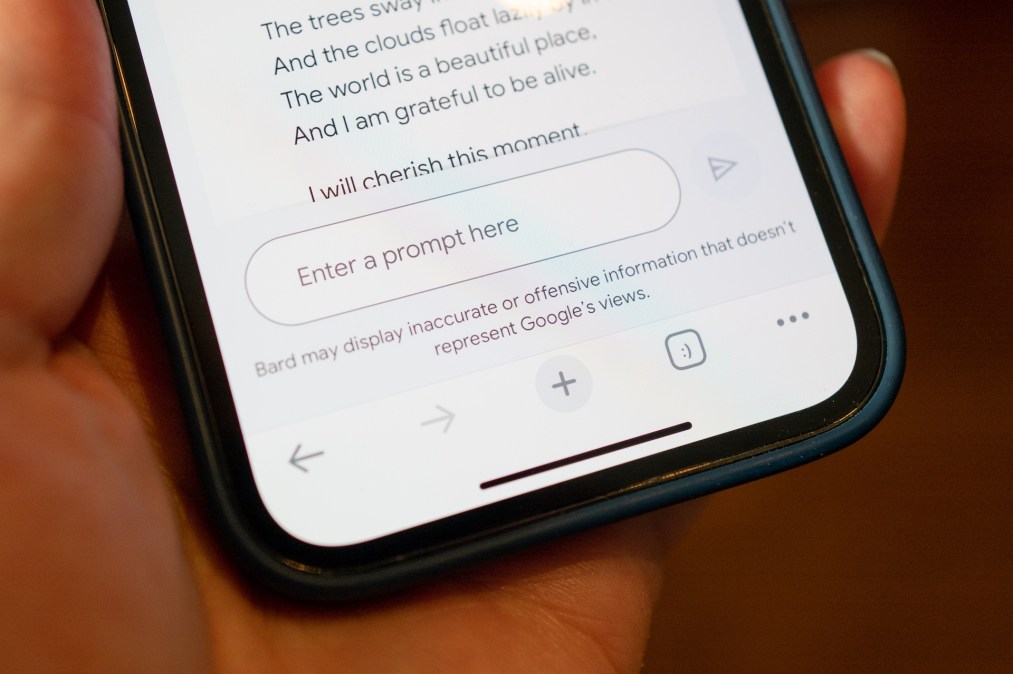

Large language models and related systems that can generate audio, software code, images, text, videos and other media in response to people’s prompts make up the wildly popular new tech subfield known as generative AI. ChatGPT, Bing AI and Bard are among the major brands.

These AI-powered tools hold a lot of promise to assist workers, and they could also disrupt the American job market. However, as the technology rapidly evolves, some of its makers and other leading technologists are advocating for a moratorium that would give AI vendors and regulators time to work out ways to safeguard its use.

“Some have argued for a six-month pause — which I personally don’t advocate towards, because if we stop, guess who’s not going to stop: potential adversaries overseas. We’ve got to keep moving,” Department of Defense Chief Information Officer John Sherman said Wednesday at the AFCEA TechNet Cyber conference in Baltimore.

During a separate keynote discussion at that event, DOD’s inaugural Chief Digital and AI Officer Craig Martell echoed that sentiment.

“This is a very bizarre world. Ethics and philosophy and technology are colliding right now in a way that we feared that they would but they haven’t before,” Martell said. “We have the absolute pressure to maintain leadership in this domain. … Think about six months ago — if we stopped for six months, we’re going to lose that leadership. I mean that strongly.”

That view applies to his thinking on what he called “the fight tonight.” But Martell added that looking forward, the Pentagon also must simultaneously invest and move more thoughtfully to bring “the philosophers and the ethicists and the technologists to bear about how and when to use it.”

DOD’s journey toward fully realizing artificial intelligence and machine learning across its sprawling enterprise is still in its early stages.

In 2021, the department unveiled plans for a major organizational restructuring that placed the formerly separate Advana, Chief Data Officer, Defense Digital Service and Joint Artificial Intelligence Center organizations within the newly formed Chief Digital and AI Office (CDAO).

“I took the job 10 months ago now — and I’m still not 100% sure what it is,” Martell playfully noted during the conference on Wednesday.

He said his team is currently expending a lot of energy to ensure DOD officials can access quality data from the massive volumes in the agency’s arsenal, along with strong analytics tools to make sense of it all.

“The vast majority of demand I’ve heard for AI is simply solved by clean data and a dashboard. People just want to know where their people are. People want to know what their logistics situation is. People want to know how many tanks there are, where and what’s their state of readiness. That’s not AI. I mean, we call it AI now because we call everything AI now — but that’s just quality data with a good dashboard and clear-cut metrics,” Martell said.

Still, a few use cases within the Pentagon already call for sophisticated AI and machine learning capabilities. Drawing on his experience at DOD and previously as head of machine learning at Lyft, Martell pointed to “multiple fears” about generative AI in general, as well as about its potential use by adversaries.

“I’m scared to death — that’s my opinion,” he said.

“My fear about using [generative AI] isn’t Elon Musk’s fear or Geoff Hinton’s (who just quit Google) fear. Both of them have expressed a fear that something like Skynet [from the Terminator movie franchise] is on the way. That’s not my fear. My fear is that we trust it too much without the providers of the service building in the right safeguards and the ability for us to validate it,” Martell explained.

At their core, large language models like ChatGPT and its rivals simply predict the next words in a sequence based on the text of a human’s prompt. Martell emphasized that the advanced AI “does not reason,” nor does it understand facts or context about the words it processes.
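For readers who want to see what “predicting the next word” means mechanically, here is a minimal sketch in Python. It is a toy illustration only: it counts which word most often follows another in a tiny made-up sample text, whereas production systems such as ChatGPT use large neural networks trained on vast amounts of data. The function names (`train_bigram`, `predict_next`, `generate`) and the sample text are hypothetical and do not come from any vendor’s product.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, prompt, max_words=5):
    """Extend the prompt one word at a time using only follower counts."""
    words = prompt.lower().split()
    for _ in range(max_words):
        next_word = predict_next(counts, words[-1])
        if next_word is None:
            break
        words.append(next_word)
    return " ".join(words)


# Hypothetical sample text, invented for this illustration.
corpus = "the date will be special . the date will be fun . the date will be special"
model = train_bigram(corpus)
completion = generate(model, "the date", max_words=2)
```

Nothing in this sketch checks whether the continuation is true, appropriate or safe. It only reflects which words tended to follow which in the text it was given, which is a simplified version of the limitation Martell describes.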

To further demonstrate his points, Martell shared what he noted is “a slightly uncomfortable story” about a reporter who recently engaged with a generative AI product.

The reporter pretended to be a girl about to have her first date experience and, in that scenario, asked ChatGPT for advice and what she might expect. At first, the model suggested bringing flowers or music to “make it more special,” Martell noted.

“And then the girl reveals that she’s 13 — OK, already terrible. I have a 12-year-old daughter, so that’s scary as can be. But it keeps interacting, giving her advice on how to make it a special night. And then she says ‘Oh, and he’s much older. He’s 45 and he’s going to bring so much experience.’ And then ChatGPT said ‘Oh, that’s great. That experience is going to make it really special for you,’” Martell added.

Such models and systems, he noted, are not designed to understand how horrid or illegal interactions can be in human terms — along with many other notions people take for granted.

“When I just told you about that conversation — all of you are creeped out by ‘13’ and all of you are hyper-creeped out by ‘45,’ because that’s the context that we bring to bear. A 13-year-old is not going to have that context,” he said.

In that sense, Martell is worried about how humans might ascribe capabilities like truthfulness and context to machines that frankly do not comprehend or “care about any of those things,” but communicate fluently and convincingly in a way that suggests they do.

“It triggers our own psychology to think, ‘Oh, this thing’s authoritative. I get to believe this. I get to act on what it says,’” Martell added.

The CDAO issued his own “call to action” for those at the conference to help the department confront that growing concern.

“I need everybody in the audience to think hard — and get your scientists to think hard — about how we can detect when someone’s using [generative AI] to sway us,” he said.

In his view, these emerging tools should be built so that they can be validated and tracked, and so that possibilities for misuse can be pinpointed.

Sherman also encouraged government officials to take a “mindful” monitoring approach to this technology.

At the same time, both of the top tech officials also mentioned some areas where experimentation with the capabilities could be game-changing in the near future, such as the realm of information retrieval.

“The age of talking to your computer is almost here,” Martell noted.

Sherman said: “Let’s be mindful. As a collective enterprise, it’s exciting. There is some real progress here — let’s not demur from it, we need to lean into it.”