Ukraine is ‘extraordinary laboratory’ for military AI, senior DOD official says

Although Russia’s ongoing invasion of Ukraine is a “horrible thing,” it’s allowing the U.S. military to learn valuable lessons about military employment of artificial intelligence, a top Pentagon official told reporters Tuesday.

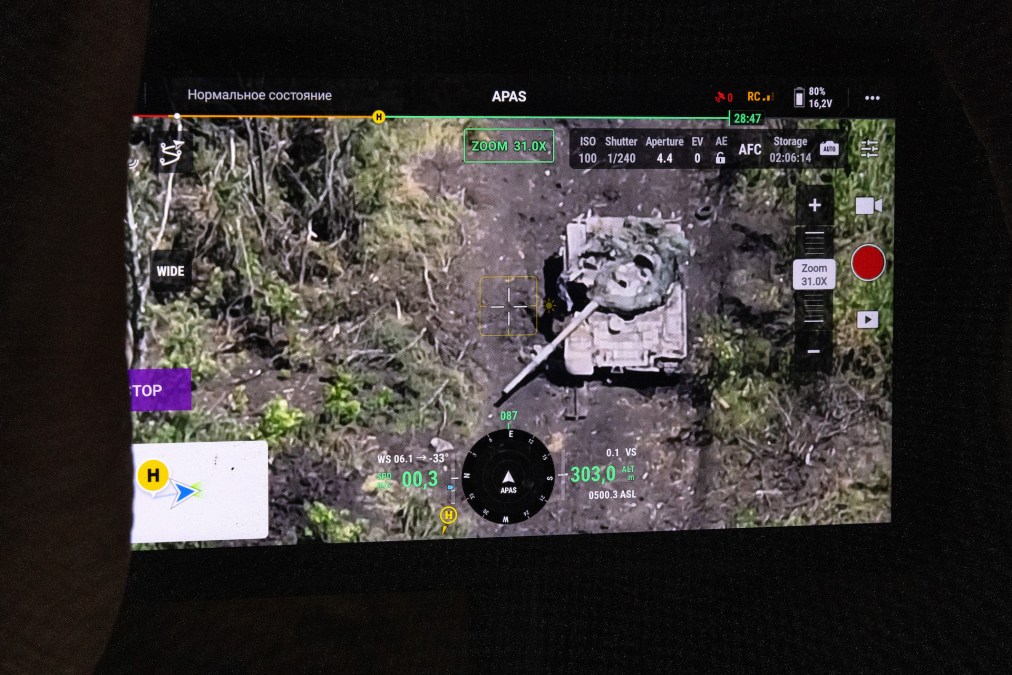

Drones with some semi-autonomous capabilities have played a major role in the conflict. And the Ukrainians are increasingly integrating AI into their systems, as noted in recent news reports.

“There is an extraordinary laboratory for understanding the changing character of war in Russia’s unprovoked aggression on Ukraine. Now, to be clear, it is a horrible thing. That said, it is occurring and we have to try to learn from it … And I can tell you there are really robust efforts across the department to ensure that we figure out what, you know, what we’re learning, how and in what ways does it impact how we understand that changing character of war. We also understand other countries are also learning … I think a piece of that is absolutely the role of drones and also artificial intelligence,” Mara Karlin, who has been serving as the assistant secretary of defense for strategy, plans and capabilities and recently took on the role of acting undersecretary of defense for policy, told reporters at a Defense Writers Group meeting.

Relatively low-cost unmanned systems have appeared in previous conflicts, including the war between Armenia and Azerbaijan that began in 2020, she noted.

“Obviously, now we have seen that a whole lot [in Ukraine]. And that’s really, really notable. On the AI front this is also probably the case study. It’s hard to look at kind of other conflicts from the last few years where we’ve seen it being used in the same way at the same level. And that’s really, I think, pushed a real culture change,” Karlin said.

The Pentagon is taking notes from Ukraine as the U.S. military pursues its own algorithms and AI-enabled platforms, including drones and other tools.

The department is also collaborating with its international partners in this realm. In April, members of the AUKUS alliance, which includes Australia, the United Kingdom and the United States, held their first joint artificial intelligence and autonomy “trial” in the U.K. The event included the deployment of AI-equipped drones in a “collaborative swarm” to test their ability to detect and track simulated targets. It also included in-flight retraining of AI models and the sharing of models among the three countries, according to a DOD release.

The Pentagon recently updated DOD Directive 3000.09, the policy that sets up a framework for the development, fielding and employment of autonomous weapons. After the Defense Writers Group meeting on Tuesday, DefenseScoop asked Karlin if any autonomous weapons have already undergone the high-level reviews mandated by the policy.

“I don’t have anything to report on that at this time,” she replied. However, “part of the reason we updated [the policy] was because … we really wanted to ensure we were incentivizing folks to be creative and think under the key policy principles about how and in what ways autonomous capabilities might be relevant, and then go through an actual process” with oversight of their development and fielding.

During the confab with reporters, Karlin noted that the Defense Department has also set up a new Chief Digital and AI Office (CDAO) that reports directly to Pentagon leadership. It’s tasked with helping the department adopt artificial intelligence across the enterprise for a variety of use cases.

“This office … has worked really hard to get folks comfortable with a lot of these things that may feel ephemeral, if you will, to a building that like relies on paper and what have you. And one of the things that they push really hard is making sure you’ve got chief data analytics officers at like all of the different combatant commands as well. And I can’t emphasize enough just how important this is, right. Because you’re trying to literally help the system understand that there is this kind of key approach to how to take in a whole bunch of information from a whole bunch of sources in a really fast way, and then incorporate it into your day-to-day work, right. Because it’s not terribly interesting if we see AI kind of in this still ephemeral way and not really shaping decision-making,” she said.

The Chief Digital and AI Office has also been leading a series of Global Information Dominance Experiments (GIDE) in partnership with the Joint Staff and U.S. military combatant commands around the globe.

“Having the CDAO shop has been really, really important in helping folks, I think, internalize and realize on a daily basis the impact AI can have. And a lot of that is coming down to how you look across a whole bunch of sources and synthesize them and then understand whether it’s on a battlefield, whether it’s thinking about force management, whether it’s thinking about planning, you name it — how do you do that in a more kind of effective and deeply understood way,” Karlin said. “We’ll, I think, have a lot to learn on both of these pieces. But it feels as though kind of the speed of learning has gotten increasingly robust.”