Data and AI: How CDAO is accelerating DOD’s mission

The Pentagon’s Chief Digital and Artificial Intelligence Office (CDAO) expects to release new guidance in the coming months on how the Department of Defense should develop and adopt data analytics and artificial intelligence capabilities to support mission-critical operations. The DOD has placed a strong focus on AI development for several months, and this move from the CDAO clearly aligns with the federal government’s approach to addressing these emerging technologies.

This guidance aims to provide boundaries for organizations within the Pentagon around how to develop and ethically use AI solutions in priority areas, such as cybersecurity, healthcare, financial management, human performance, supply chain visibility and more. However, placing boundary conditions on a technology that presents unlimited potential is an extensive and complicated undertaking.

To effectively accelerate the DOD’s mission, this policy will need to be extremely agile to keep pace with ongoing technological development. Taking a “skate to where the AI puck will be and not chase it” approach to developing and adopting this technology will help preserve flexibility as these capabilities continue to evolve. Just as important when developing this policy is the role data will play in successful AI deployment. As the currency of the digital age, data must be a central consideration for the future success and use of AI technology.

The role of data in determining the success of AI solutions

The success of AI is based on trusted data. The depth and breadth of data, its clarity, availability, and trustworthiness all come into play when dealing with these emerging technologies. Most implementations of AI, generative or otherwise, are complex undertakings that rely upon vast datasets and models that must be controlled and protected. If the data is altered, the outcomes in the models will be too.

It’s important for the DOD to double down on the visibility and security of its data while weighing considerations for the upcoming guidance. A modern data architecture that supports large language models (LLMs) and culminates in an open data lakehouse is one way to support advanced analytics and inform generative AI. This lets analysts and data experts access and collaborate on the same data more easily, and it reduces the risk of vendor lock-in and the need to move data across tools or clouds.

Locating, collecting and analyzing data in one secure location is only the first step. Agencies like the DOD, which manage and store copious amounts of data, can unlock the most power from that data using a hybrid cloud infrastructure.

A hybrid, data-first strategy not only reduces silos and data fragmentation but also bolsters discovery, movement and analytics processes. This cannot be overstated: AI efforts will fail without effective data management, which includes discovering all data for increased visibility, classifying it and dividing it among teams, and keeping it available and secure.

Advancements and drawbacks of AI for DOD’s mission

In this upcoming guidance, the department will clearly identify boundaries for the ethical use of AI in support of the broader mission. AI can be used to accelerate the mission of the DOD, but the policies around it will need to be extremely agile to keep pace with ongoing innovation in the private and academic sectors.

It’s likely that any policy will lag the state of the technology: use of AI is moving at “light speed,” and the policy will likely be written many months after the technology has been put to use. As we’ve seen, AI and data analytics are already being adopted at an accelerating pace in the department.

In response to this rapid development, the Pentagon recently established Task Force Lima to investigate the implications and benefits of generative AI, further underscoring its commitment to harnessing AI in an ethical manner. Also led by the Pentagon’s CDAO, Task Force Lima seeks to explore the responsible integration of generative AI models by identifying protective measures, ensuring that military use of this technology is aligned with national security.

As DOD leaders begin to develop new policies around AI, it will become clear that the key advancements in harnessing this technology can also bring drawbacks, especially in securing data. When using AI tools, where will all of this information be stored? Will it be publicly accessible? Will search keywords be used to infer teams’ plans and strategies?

In order to better protect the nation moving forward, the department must also be prepared for any potential blind spots that can be easily exploited by adversaries.

Using AI tools without compromising security

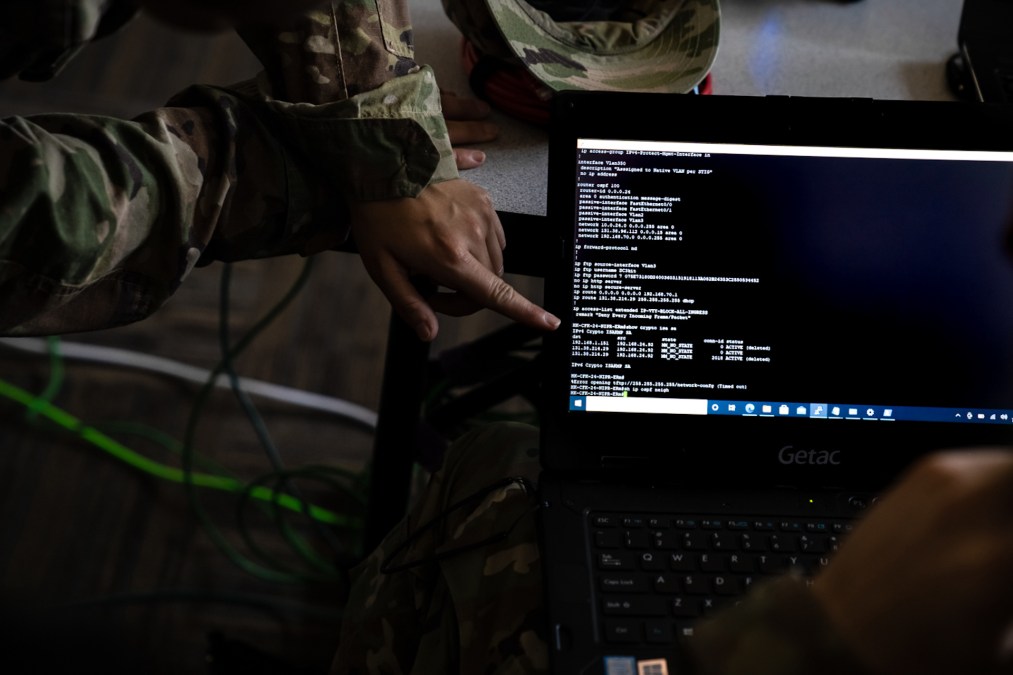

In the world of cybersecurity, AI can automate lower-level defense decision-making and could perhaps be used to detect when AI-based malware is deployed against the military. Conversely, AI can be used to insert malware with greater efficacy than was previously possible. The upcoming data analytics policy must consider adversaries’ use of the technology and address that potential threat to national security.

In a recent memo, the White House listed considerations for ensuring safe, secure and trustworthy AI. The memo outlines voluntary commitments for organizations that are developing AI solutions in the interim, before official guidance arrives. From a cybersecurity perspective, that means building systems that put security first: these systems should be protected against cyber threats and kept up to standards to reduce critical cyber risks.

AI is a very unpredictable technology that we are just beginning to understand — it will be a difficult task to stay ahead of all potential cyber threats. However, agencies can use best practices for software security, data protection and visibility to take a proactive approach to these emerging technologies.

This includes moving towards a robust zero trust architecture to make certain that data is protected and accessible to the right people, and following protocol according to government standards as new guidance is developed.

Emerging technologies like AI and data analytics present boundless opportunities to advance the mission of the department, such as providing healthcare, detecting fraud, and enhancing cybersecurity and supply chain visibility. As new guidance is released for how the DOD can develop and adopt these capabilities, the department must ensure trusted data remains at the core of any solution to advance the mission and strengthen national security moving forward.

Rob Carey is president of Cloudera Government Solutions. He has held a number of senior positions in industry and at the Defense Department, including serving as the Navy’s chief information officer and the DOD’s principal deputy CIO.