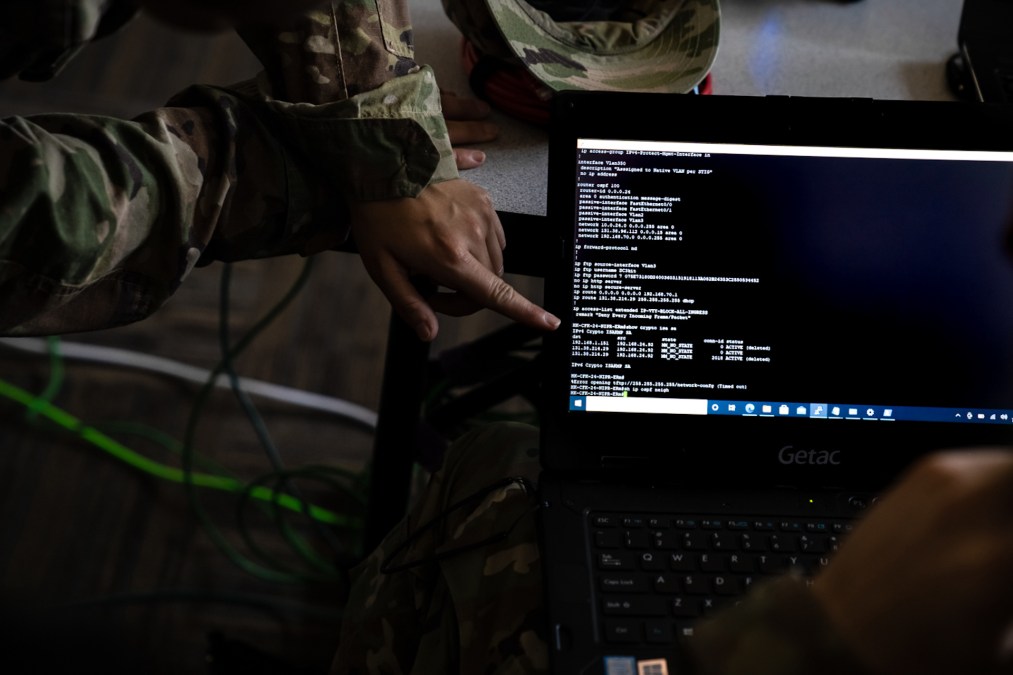

Pentagon testing generative AI in ‘global information dominance’ experiments

The Defense Department is integrating multiple generative AI models into its series of global exercises that are intended to test out capabilities that could support the U.S. military’s Joint All-Domain Command and Control warfighting construct, according to a senior official.

The latest iteration of the so-called Global Information Dominance Experiments (GIDE) is being led by the Pentagon’s Chief Digital and Artificial Intelligence Office in partnership with the Joint Staff. Participants are convening in-person and virtually from the Pentagon and field offices across multiple combatant commands. The wargame kicked off June 5.

“GIDE 6 will leverage our insights from GIDE 5 metrics to further test our capabilities by replicating real-world operational scenarios that enable us to learn and adapt in a controlled experimentation environment,” mission commander Col. Matthew Strohmeyer said in a statement last month. “We aim to push the boundaries of our current systems and processes in this iteration, leveraging longer experiment duration and expanding collaboration to enhance the success of this and future experiments.”

Generative AI, which has gone viral in recent months with the emergence of ChatGPT and other tools that can generate content such as text, audio, code, images, videos and other types of media based on prompts and the data they’re trained on, is being utilized as part of the effort, according to Margie Palmieri, the Pentagon’s deputy chief digital and AI officer.

“We are experimenting with different generative AI models, most recently in GIDE. We have about five different models really just to test out, you know, how do they work? Can we train them on DOD data or tune them on DOD data? How do our users interact with them? And then what metrics do we want to come up with based off of what we were seeing to facilitate evaluation of these tools? Because there aren’t really great evaluation metrics for generative AI yet, and … we want to make sure that we have the ability to understand its capability and know when it’s going to be effective or when it’s not going to be effective,” she said this week at a RAND Corp. event.

Palmieri didn’t identify the AI models that are being used in the experiments or provide an assessment of how well they’re performing. GIDE 6 is ongoing and isn’t slated to wrap up until July 26. But she suggested that one aim is to explore various potential use cases and see how well the technology performs.

“The way we think [about] AI is it really is use-case based. So even in these generative AI models, they’re not the solution for every use case … In DOD, we have computer vision to look for object detection and tracking. We have natural language processing to look through policy documents and, you know, find correlations. We have other types of [machine learning] algorithms for predictive maintenance. So generative AI is no different. There are going to be use cases that it’s really, really good for and there are going to be use cases it’s not good for,” Palmieri said.

Officials say that in the future the U.S. military could leverage generative artificial intelligence to enable things like “decision support and superiority,” which is an important element of the Joint All-Domain Command and Control (JADC2) warfighting concept. The goal of JADC2 is to better connect the armed forces’ sensors, shooters and networks and enable better and faster decision-making.

However, Pentagon officials are concerned about the shortcomings of the technology, noting that current commercial products have been known to “hallucinate” and provide inaccurate information.

“What we found is there’s not enough attention being paid to the potential downsides of generative AI, specifically hallucination. This is a huge problem for DOD and it really matters for us and how we apply that. And so we’re looking to work more closely with industry on these types of downsides and not just hand wave them away. So to do that we are experimenting with different generative AI models” in experiments such as GIDE, she said.

Maynard Holliday, the Pentagon’s deputy CTO for critical technologies, has said the Defense Department won’t field commercial-off-the-shelf apps like ChatGPT in their current instantiation. Instead, generative AI technology will need to be tailored to meet U.S. military needs. And the Pentagon plans to work with industry and academia in this regard.

“Large language models have a lot of utility. And we will use these large language models, these generative AI models, based on our data. So they will be tailored with Defense Department data, trained on our data, and then also on our … compute in the cloud and/or on-prem, so that it’s encrypted and we’re able to essentially … analyze its, you know, feedback,” he said last month at Defense One’s annual Tech Summit.

He later told DefenseScoop that the Pentagon wants “multimodal” capabilities that incorporate language, vision and signals.

Holliday also noted a connection between the pursuit of generative AI capabilities and the JADC2 initiatives.

“We fight jointly, right? And so it’ll be a joint model with … all of those efforts feeding into the model,” he told DefenseScoop. “That’s what it’s going to take.”